
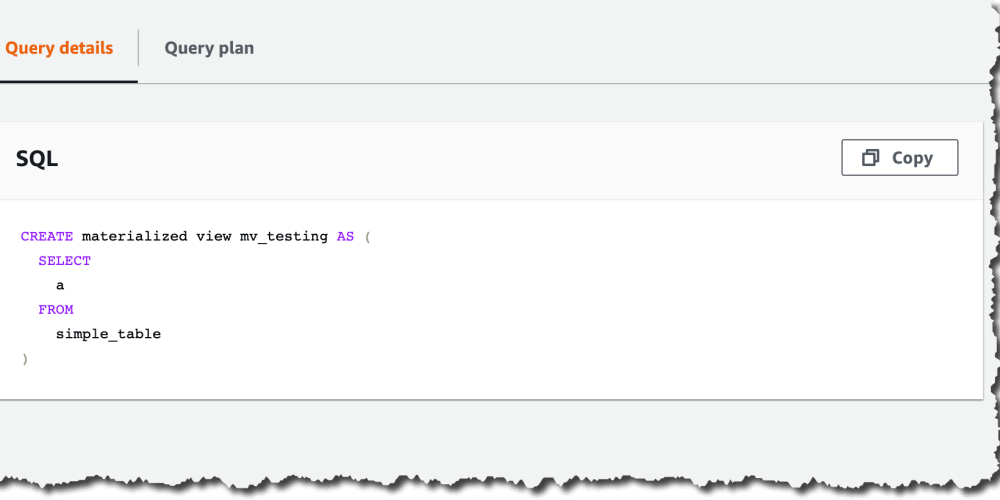

Now we are going to load our sample data into the S3 bucket that our CloudFormation template created. S3 buckets are a simple and inexpensive way to store data outside of Redshift.

The data used in this course is stored as CSVs in a public S3 bucket. You can use the following URLs to download these files. Download these to your computer to use in the following steps.

Now we are going to use the S3 bucket that you created with CloudFormation and upload the files. Go to the search bar at the top, type in S3, and click on S3. There will be sample data in the bucket already; feel free to ignore it or use it for other modeling exploration. The bucket will be prefixed with dbt-data-lake. Click on the name of the S3 bucket. If you have multiple S3 buckets, this will be the bucket that was listed under “Workshopbucket” on the Outputs page.

Drag the three files into the UI and click the Upload button. Remember the name of the S3 bucket for later. It should look like this: s3://dbt-data-lake-xxxx.

Now let’s go back to the Redshift query editor. Search for Redshift in the search bar, choose your cluster, and select Query data. In your query editor, execute the query that creates the schemas we will be placing your raw data into. You can highlight each statement and then click on Run to run it individually. If you are on the Classic Query Editor, you might need to input the statements separately into the UI. Once they have run, you should see these schemas listed under dbtworkshop.

When you develop in dbt Cloud, you can leverage Git to version control your code.
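The schema-creation step can be sketched as a small script that prints one statement per schema, ready to paste into the query editor and run individually. This is only an illustration: the original query is not reproduced in this post, so the schema names in `RAW_SCHEMAS` and the helper `create_schema_statements` are hypothetical placeholders, not the course's actual names.

```python
import re

# Hypothetical schema names: the post does not show the original query,
# so replace these with the schemas your raw data actually needs.
RAW_SCHEMAS = ["raw_schema_one", "raw_schema_two"]

# Only allow plain lowercase identifiers so nothing unexpected ends up in the SQL.
_IDENTIFIER = re.compile(r"^[a-z_][a-z0-9_]*$")


def create_schema_statements(schemas):
    """Return one idempotent CREATE SCHEMA statement per schema name."""
    statements = []
    for name in schemas:
        if not _IDENTIFIER.match(name):
            raise ValueError(f"unsafe schema name: {name!r}")
        # IF NOT EXISTS makes the statement safe to re-run.
        statements.append(f"create schema if not exists {name};")
    return statements


if __name__ == "__main__":
    # Print each statement on its own line so it can be run individually.
    for statement in create_schema_statements(RAW_SCHEMAS):
        print(statement)
```

Emitting each statement on its own line matches the advice to run them one at a time, which matters on the Classic Query Editor.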

Redshift Query Editor v2 Connect to Redshift Cluster
